Self-Improving Pretraining as a Substrate for Agentic Post-Training

Reference Terms

- Self-Improving Pretraining uses a stronger post-trained model to supervise pretraining of the current policy. The data stream is split into a prefix and a suffix (the last N tokens). The post-trained model can rewrite the suffix into a higher-quality target or act as a judge over the original suffix, the rewrite, and K rollouts from the current policy. Reinforcement learning pretrains the policy using the judge’s reward signal.

- Online DPO (direct preference optimization) is the RL objective Tan et al. use during self-improving pretraining. For each prefix, the judge scores a candidate set (original suffix, rewrite, K rollouts), and the update treats the highest-scoring candidate as chosen and the lowest-scoring candidate as rejected. Unlike GRPO, DPO is off-policy, so it can learn from candidates the current policy did not produce.

- Interleaved thoughts are short reasoning spans inserted by a teacher model into ordinary pretraining chunks at semantically appropriate positions. The augmented chunk alternates segments of original text with inserted thoughts, while the original content still concatenates back to the unaltered chunk. The student first learns the format through SFT and is later rewarded for thoughts that help predict the next chunk.

- RLMT (reinforcement learning mid-training) is the second mid-training phase. Each augmented pretraining chunk is split into a prefix and a ground-truth suffix. From the prefix, the model generates a thought followed by a predicted suffix. An LLM judge compares the predicted suffix to the ground-truth suffix and emits a binary reward, and the RL update maximizes that reward. The reward is on the thought-conditioned suffix prediction, not on the thought itself.

- Reward gate is the held-out evaluation that scores the same object RLMT trains on. The model generates a thought, predicts the suffix, and the judge scores the predicted suffix against the true suffix on items the model never saw during training.
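The Online DPO entry above describes picking a chosen and a rejected candidate from a judged set. A minimal sketch of that selection step follows. The `Candidate` class, the score scale, and the example scores are illustrative assumptions, not Tan et al.'s implementation.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    source: str   # "original", "rewrite", or "rollout"
    text: str
    score: float  # judge score, higher is better (the scale is an assumption)


def select_preference_pair(candidates):
    """Return (chosen, rejected): the highest- and lowest-scoring candidates."""
    if len(candidates) < 2:
        raise ValueError("need at least two candidates to form a pair")
    chosen = max(candidates, key=lambda c: c.score)
    rejected = min(candidates, key=lambda c: c.score)
    return chosen, rejected


# Toy candidate set for one prefix; the scores are made up for illustration.
candidates = [
    Candidate("original", "suffix from the corpus", 0.6),
    Candidate("rewrite", "teacher rewrite of the suffix", 0.9),
    Candidate("rollout", "policy rollout 1", 0.4),
    Candidate("rollout", "policy rollout 2", 0.7),
]
chosen, rejected = select_preference_pair(candidates)
print(chosen.source, "|", rejected.text)
```

Because the chosen candidate may be the original suffix or a rewrite rather than a rollout, the resulting pair can contain text the current policy did not produce, which is the off-policy property the entry mentions.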

Shaping Model Behavior

Modern model training is a lifecycle. A base model is pretrained, adapted through mid-training, extended for context length, taught thinking formats, preference-tuned, and then optimized with verifiable rewards.

Agentic post-training asks that model to reason through uncertainty, plan over longer horizons, use tools, revise its beliefs, and recover from mistakes. Those behaviors are usually optimized late in the lifecycle. Recent RLVR work argues that this late optimization often improves sampling efficiency more than it creates fundamentally new reasoning patterns.

Can more of that behavior be shaped earlier in the lifecycle, particularly during pretraining, so the model arrives at agentic post-training already prepared for it?

If we take ordinary pretraining text and turn it into a training environment, a prefix and suffix can become a continuation-quality task. A raw chunk can become an interleaved-thinking example. A generated thought can become useful or useless depending on whether it helps predict what comes next.

Rephrasing a pretraining corpus with synthetic data is already a known method. Here, the learning problem around the corpus also changes. Instead of treating pretraining as passive exposure to text, the training loop can ask whether the model can generate a better continuation, whether it can insert local reasoning into the text, and whether those thoughts help downstream prediction.

The direction shares ground with synthetic pretraining and active data design more broadly. Hugging Face’s Synthetic Data Playbook is a practical guide to prompt design, data mixing, small generators, and train-and-evaluate loops. Tufa Labs reports a benchmark-positive version of very-small-model synthetic pretraining. The Berkeley Sky Computing Lab and NVIDIA lecture treats it as active curriculum design. Vintage Data argues that synthetic pretraining is data design moving earlier in model development.

This experiment tested whether a small base model could be shaped earlier in the lifecycle, before agentic post-training, using the same kind of pretraining chunks it would normally only imitate.

From Pretraining Text to Training Environment

Meta’s paper from Tan et al. proposes two linked training ideas. The first is self-improving pretraining. The second is thinking mid-training, Tan et al.’s umbrella term for the mid-training arc that adds interleaved reasoning to pretraining text. In that arc, SFT teaches the thought format, and RLMT then rewards thoughts that help predict the next chunk.

Self-improving pretraining changes what the model is asked to learn from a pretraining chunk. In standard next-token pretraining, the model sees a prefix and learns to imitate the suffix that happened to come next in the corpus. Tan et al. instead treat that suffix as one candidate among several. The model generates its own continuations, a judge compares the candidates, and the training update favors the continuation judged to be better. The key behavior is not just lower loss on the original text. It is whether the model’s own rollouts start becoming good enough to beat the data suffix.

Thinking mid-training works one stage later. Mid-training is the stage between base pretraining and final post-training, where labs often adapt a model toward longer context, reasoning formats, domain mixtures, or other broad capabilities before task-specific alignment. Tan et al. use this stage to teach a model to interleave short thoughts inside ordinary text. A teacher model inserts local reasoning spans into pretraining chunks, the student learns that format through supervised fine-tuning, and then RL mid-training (RLMT) rewards the student when its generated thought helps predict the held-out continuation.

During RLMT, the model receives a prefix, generates a thought, then predicts the next suffix. A judge scores the predicted suffix against the real suffix. The thought is only useful if it helps the model predict what should come next.
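The reward computation in that loop can be sketched as below. The `generate` and `judge` callables are hypothetical stand-ins for the policy and the LLM judge, and the word-overlap judge is only a toy so the example runs without a model.

```python
def rlmt_reward(prefix, true_suffix, generate, judge):
    """Binary reward on the thought-conditioned suffix, not on the thought."""
    thought, predicted_suffix = generate(prefix)
    # The thought is deliberately unused here: it only matters through
    # its effect on the predicted suffix.
    return 1 if judge(predicted_suffix, true_suffix) else 0


def toy_generate(prefix):
    # Hypothetical policy stub: returns a fixed thought and suffix.
    thought = "The passage is about photosynthesis, so energy comes next."
    predicted = "Plants convert light into chemical energy."
    return thought, predicted


def toy_judge(predicted, truth):
    # Hypothetical judge stub: accept if at least half of the true
    # suffix's words also appear in the prediction.
    p = set(predicted.lower().split())
    t = set(truth.lower().split())
    return len(p & t) / max(len(t), 1) >= 0.5


prefix = "Photosynthesis takes place in the chloroplasts."
good = rlmt_reward(prefix, "Plants convert light energy into chemical energy.",
                   toy_generate, toy_judge)
bad = rlmt_reward(prefix, "The stock market fell sharply today.",
                  toy_generate, toy_judge)
print(good, bad)
```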

The same corpus thus becomes multiple training environments. One view of the text asks which continuation is better. Another asks where local reasoning should be inserted. Another asks whether a generated thought improves suffix prediction. Raw chunks become self-improving continuation preference data, then interleaved-thinking data, then supervised thought-interface training, then RLMT.

- Continuation preference: The model generates K alternative continuations. A judge ranks them against the original suffix, and Online DPO favors the highest-scoring candidate.

- Interleaved thinking: A teacher model inserts short thoughts at semantically appropriate positions. The student learns the format through SFT, where the original text still concatenates back to the unaltered chunk.

- RLMT: The model generates a thought, then a predicted suffix. The judge scores the predicted suffix against the true continuation, and reward depends on whether the suffix is good enough.

A Small-Scale Lifecycle Experiment

I ran the adaptation on Qwen3-0.6B-Base to test whether the lifecycle shape was trainable at small scale.

The data came from FineWeb-Edu, materialized into ordinary pretraining chunks. Each chunk had a prefix and the true suffix that followed it in the corpus. That same prefix-suffix structure stayed constant across the experiment. What changed was the learning problem wrapped around it.

The first stage was self-improving continued pretraining with Online DPO. For each prefix, the model generated 16 candidate continuations. A larger Qwen judge compared the original suffix and the policy rollouts, using full-pairwise comparisons rather than a single pivot comparison. The update pushed the model toward the judged-better continuation and away from the judged-worse one.
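One way to picture full-pairwise judging is a round robin over all candidates, sketched below. With the original suffix plus 16 rollouts there are 17 candidates and therefore 136 unordered pairs. The win-count aggregation, the `judge` signature, and the length-based toy judge are assumptions for illustration. The experiment's actual aggregation rule is not described here.

```python
from itertools import combinations


def pairwise_win_counts(candidates, judge):
    """Compare every unordered pair once and count wins per candidate."""
    wins = {name: 0 for name in candidates}
    for a, b in combinations(candidates, 2):
        # judge(x, y) returns True when x is preferred over y.
        winner = a if judge(candidates[a], candidates[b]) else b
        wins[winner] += 1
    return wins


def toy_judge(text_a, text_b):
    # Hypothetical stand-in for the LLM judge: prefers the longer text.
    return len(text_a) >= len(text_b)


candidates = {"original": "aaaa", "rollout_0": "aa", "rollout_1": "aaaaaa"}
wins = pairwise_win_counts(candidates, toy_judge)
chosen = max(wins, key=wins.get)
rejected = min(wins, key=wins.get)
print(wins, chosen, rejected)
```

A single-pivot scheme would instead compare each rollout only against one reference candidate, which needs fewer judge calls but gives no direct ordering among the rollouts themselves.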

The second stage turned the same kind of pretraining chunks into interleaved-thinking examples. A teacher model inserted short local thoughts into the text while preserving the original content. The student was then supervised on the augmented sequence, including both the original text and the inserted thoughts. This stage was not meant to prove reasoning improvement by itself. It was meant to install the interface: where thoughts appear, what they look like, and how they connect to nearby text.
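The content-preservation property can be checked mechanically. The sketch below assumes thoughts are wrapped in `<thought>...</thought>` tags, which is a hypothetical delimiter chosen for illustration. The actual tag format used in the experiment is not specified here.

```python
import re

# Hypothetical thought delimiter; the real format may differ.
THOUGHT_PATTERN = re.compile(r"<thought>.*?</thought>", flags=re.DOTALL)


def strip_thoughts(augmented):
    """Remove every inserted thought span from an augmented chunk."""
    return THOUGHT_PATTERN.sub("", augmented)


def preserves_original(original, augmented):
    """True if removing thoughts recovers the original text, ignoring whitespace."""
    def normalize(s):
        return " ".join(s.split())
    return normalize(strip_thoughts(augmented)) == normalize(original)


original = "Water boils at 100 C at sea level. At altitude it boils sooner."
augmented = (
    "Water boils at 100 C at sea level. "
    "<thought>Lower pressure should lower the boiling point.</thought> "
    "At altitude it boils sooner."
)
altered = augmented.replace("sooner", "later")

print(preserves_original(original, augmented))  # original text intact
print(preserves_original(original, altered))    # teacher changed the text
print(len(THOUGHT_PATTERN.findall(augmented)))  # number of thoughts
```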

The third stage was RL mid-training. The model received a prefix, generated a thought, then predicted the next suffix. A judge scored the predicted suffix against the real suffix. The reward did not directly grade the thought. It only asked whether the thought-conditioned continuation was good enough.

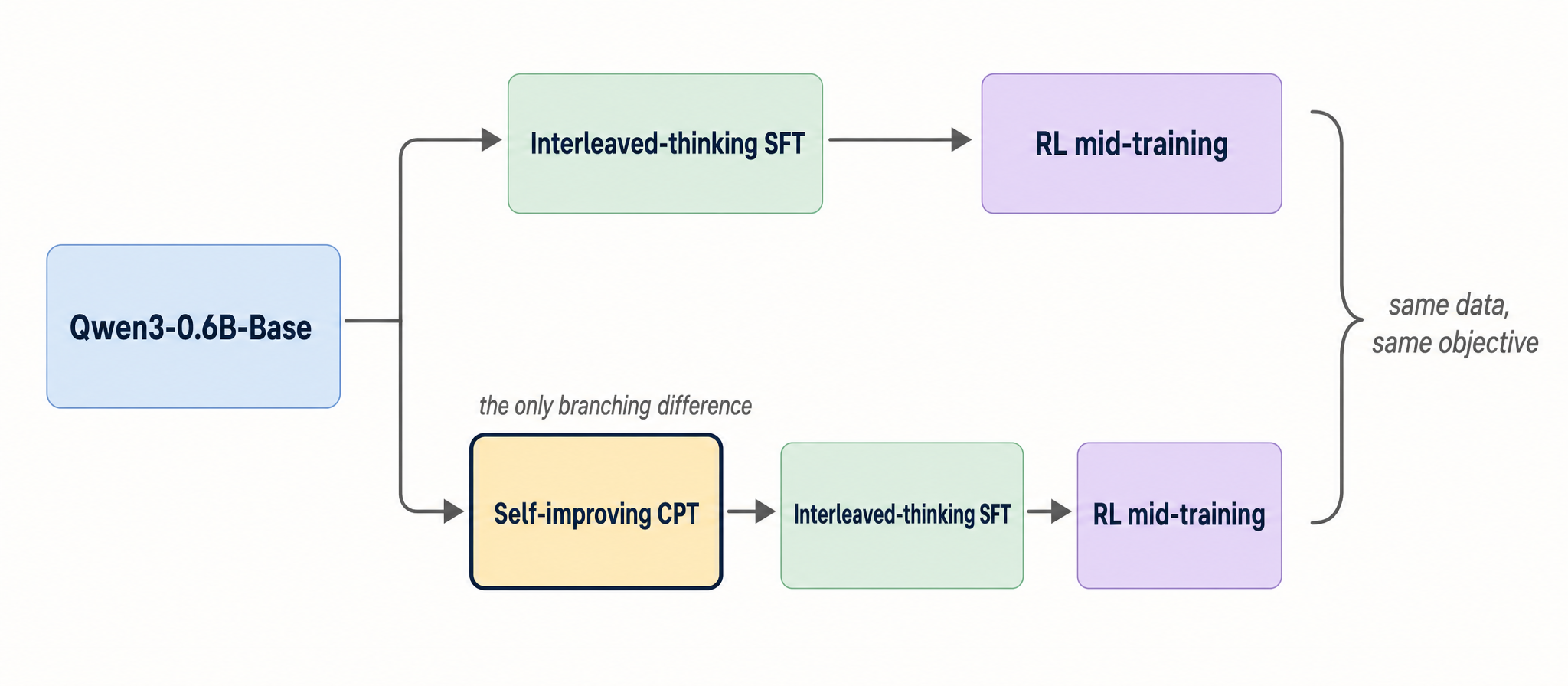

The comparison used two lineages. One started from the original Qwen3-0.6B-Base, the other from the self-improved checkpoint. Both were trained on the same interleaved-thinking data, then both went through the same RLMT setup. The question was whether self-improving pretraining made the model more receptive to later thinking mid-training.

The comparison isolates whether self-improving continued pretraining changes the model’s response to later thinking mid-training. Both lineages use the same interleaved-thinking data and RLMT objective.

| Stage | Input | Learning problem | Output |
|---|---|---|---|
| Self-improving continued pretraining | FineWeb-Edu prefix and suffix chunks | Choose between the original suffix and K=16 policy rollouts using full-pairwise judge comparisons and Online DPO | A self-improved base checkpoint |
| Interleaved-thinking SFT | The same kind of raw chunks, augmented by a teacher | Learn to reproduce original text with short local thoughts inserted | A model that can use the thought interface |
| RL mid-training | Prefixes from the augmented corpus | Generate a thought, predict the suffix, and receive reward from suffix quality | A model optimized through a sparse thought-conditioned reward |
| Evaluation | Held-out prefixes and reasoning tasks | Separate continuation quality, reward-object performance, downstream reasoning, and causal thought use | Evidence about where the lifecycle worked and where it remained immature |

One earlier from-scratch run shaped this design. A 270M scratch model was too weak for its own rollouts to compete with the data suffix. Self-improving pretraining needs a starting model whose samples are already plausible enough for the judge to sometimes prefer them.

Results

Each lifecycle stage has its own primary metric, so the results below are reported stage by stage.

Continued pretraining improved judged continuation quality

The continued-pretraining stage produced the first clean signal. On held-out prefix-suffix chunks, the self-improved checkpoint beat Qwen3-0.6B-Base on 81 of 128 pairwise continuation judgments, a 63.28% win rate.
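The win rate is simple arithmetic on the reported counts. The sketch below reproduces it and also computes a Wilson score interval as one way to gauge the uncertainty of 128 judgments. The interval is an addition for illustration and was not part of the reported analysis.

```python
import math


def wilson_interval(wins, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    p = wins / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


wins, total = 81, 128          # counts reported above
win_rate = wins / total
low, high = wilson_interval(wins, total)
print(f"win rate {win_rate:.2%}, interval [{low:.3f}, {high:.3f}]")
```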

The training dynamics moved in the same direction. The rate at which the judge selected a policy rollout rather than the original suffix rose from 62.5% to 74.6%. The DPO margin, the average log-probability gap between chosen and rejected continuations across training pairs, rose to 0.19. A larger margin means the policy more strongly prefers the judged-better continuation. The model’s own continuations became more competitive with the data suffix during training.
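A minimal sketch of how such a margin can be computed from sequence-level log-probabilities follows, alongside the standard per-pair DPO loss. The numbers are toy values, `beta=0.1` is an arbitrary illustrative setting, and the margin is taken here as a raw policy log-probability gap. Some trainers instead report a reference-corrected, beta-scaled margin, and which convention produced the 0.19 figure is an assumption left open.

```python
import math


def logprob_margin(chosen_logps, rejected_logps):
    """Mean of log p(chosen) - log p(rejected) across pairs."""
    gaps = [c - r for c, r in zip(chosen_logps, rejected_logps)]
    return sum(gaps) / len(gaps)


def dpo_loss(pol_chosen, pol_rejected, ref_chosen, ref_rejected, beta=0.1):
    """Standard DPO loss for one pair: -log sigmoid(beta * reward gap)."""
    x = beta * ((pol_chosen - ref_chosen) - (pol_rejected - ref_rejected))
    # Numerically stable form of log(1 + exp(-x)).
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))


# Toy sequence log-probabilities for two training pairs.
chosen = [-40.0, -35.0]
rejected = [-40.5, -35.1]
margin = logprob_margin(chosen, rejected)

# A policy identical to the reference gives loss log(2).
neutral = dpo_loss(-40.0, -40.0, -40.0, -40.0)
# A policy that favors the chosen text more than the reference gives less.
improved = dpo_loss(-40.0, -41.0, -40.5, -40.5)
print(round(margin, 3), round(neutral, 4), round(improved, 4))
```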

Raw suffix NLL moved the other way, from 2.56 to 2.60. Online DPO was not optimizing exact imitation of the original suffix. It was optimizing judged continuation quality, and on that objective the base model became better at producing continuations a judge preferred.

SFT installed the thought interface

The interleaved-thinking dataset had the shape needed for the next stage. A teacher inserted short local thoughts into ordinary pretraining chunks while preserving the original text. The final dataset had 8,704 rows, with 8,192 train rows and 512 validation rows. It averaged 4.39 thoughts per row, had zero malformed rows, and preserved the raw text closely, with average raw word coverage of 99.98%.

SFT over this dataset changed the model’s ability to model thought tokens. Thought NLL is the negative log-likelihood the model assigns to the inserted-thought tokens specifically, measured separately from the surrounding original text. Lower means the model is fluently producing thoughts in the trained format. Thought NLL dropped from 4.24 for the raw baseline to 3.16 for the base model trained on interleaved thoughts and 3.14 for the self-improved model trained on interleaved thoughts.
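Measuring NLL on thought tokens only amounts to a masked average over per-token log-probabilities. The sketch below assumes a per-token mean and a boolean mask marking thought positions. Both the function name and the toy values are illustrative.

```python
def masked_mean_nll(token_logprobs, mask):
    """Mean negative log-likelihood over positions where mask is True."""
    if len(token_logprobs) != len(mask):
        raise ValueError("token_logprobs and mask must have the same length")
    selected = [-lp for lp, keep in zip(token_logprobs, mask) if keep]
    if not selected:
        raise ValueError("mask selects no tokens")
    return sum(selected) / len(selected)


# Toy per-token log-probabilities for one augmented sequence.
token_logprobs = [-1.0, -2.0, -4.0, -3.0, -0.5]
is_thought = [False, False, True, True, False]

thought_nll = masked_mean_nll(token_logprobs, is_thought)
text_nll = masked_mean_nll(token_logprobs, [not m for m in is_thought])
print(thought_nll, round(text_nll, 4))
```

Keeping the two averages separate matters because a model can be fluent on the original text while still assigning low probability to the inserted thoughts.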

SFT installed the interface. It did not by itself answer whether thoughts were useful. The pre-RLMT comparison between the self-improved thinking model and the base thinking model was exactly tied at 64-64, which made RLMT the next test.

RLMT made the interface rewardable

The post-RLMT reward gate evaluated the same object RLMT trained on: generate a thought, predict the suffix, and judge the predicted suffix against the true suffix. The base thinking model had a reward mean of 0.088. The self-improved thinking model reached 0.091. After RLMT, the base lineage reached 0.094 and the self-improved lineage reached 0.098, the highest of the four.

The downstream reasoning eval was mixed. I evaluated GSM8K, MATH-500, GPQA-Diamond, and OlympiadBench with eight samples per problem. Mean@8 is the average correctness rate across 8 samples per problem, so it asks how often an average sample is correct. Pass@8 counts a problem as solved if any of 8 samples is correct, so it asks whether the model can solve the problem within an 8-sample budget. On macro Mean@8, the self-improved thinking model was strongest before RLMT at 0.175, while the self-improved RLMT model fell to 0.166. On macro Pass@8, the self-improved RLMT model was highest at 0.512.
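The two metrics can diverge because they aggregate the same samples differently. A small sketch follows, using toy correctness grids with hypothetical benchmark names and assuming "macro" means an unweighted average of per-benchmark scores.

```python
def mean_at_k(problems):
    """Average per-problem fraction of correct samples."""
    return sum(sum(samples) / len(samples) for samples in problems) / len(problems)


def pass_at_k(problems):
    """Fraction of problems with at least one correct sample."""
    return sum(any(samples) for samples in problems) / len(problems)


def macro(metric, benchmarks):
    """Unweighted average of a metric across benchmarks (an assumed definition)."""
    return sum(metric(p) for p in benchmarks.values()) / len(benchmarks)


# Toy data: each inner list holds 8 sample outcomes for one problem.
benchmarks = {
    "bench_a": [[True] + [False] * 7, [False] * 8],
    "bench_b": [[True] * 4 + [False] * 4],
}

macro_mean = macro(mean_at_k, benchmarks)
macro_pass = macro(pass_at_k, benchmarks)
print(macro_mean, macro_pass)
```

In the toy data, a problem solved once in eight tries counts fully toward Pass@8 but adds only 0.125 to that problem's Mean@8, which is how a model can gain on one metric while slipping on the other.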

Mean@8 and Pass@8 split here. At this scale, the reward-object result was cleaner than the downstream benchmark result.

Causal Behavior Analysis

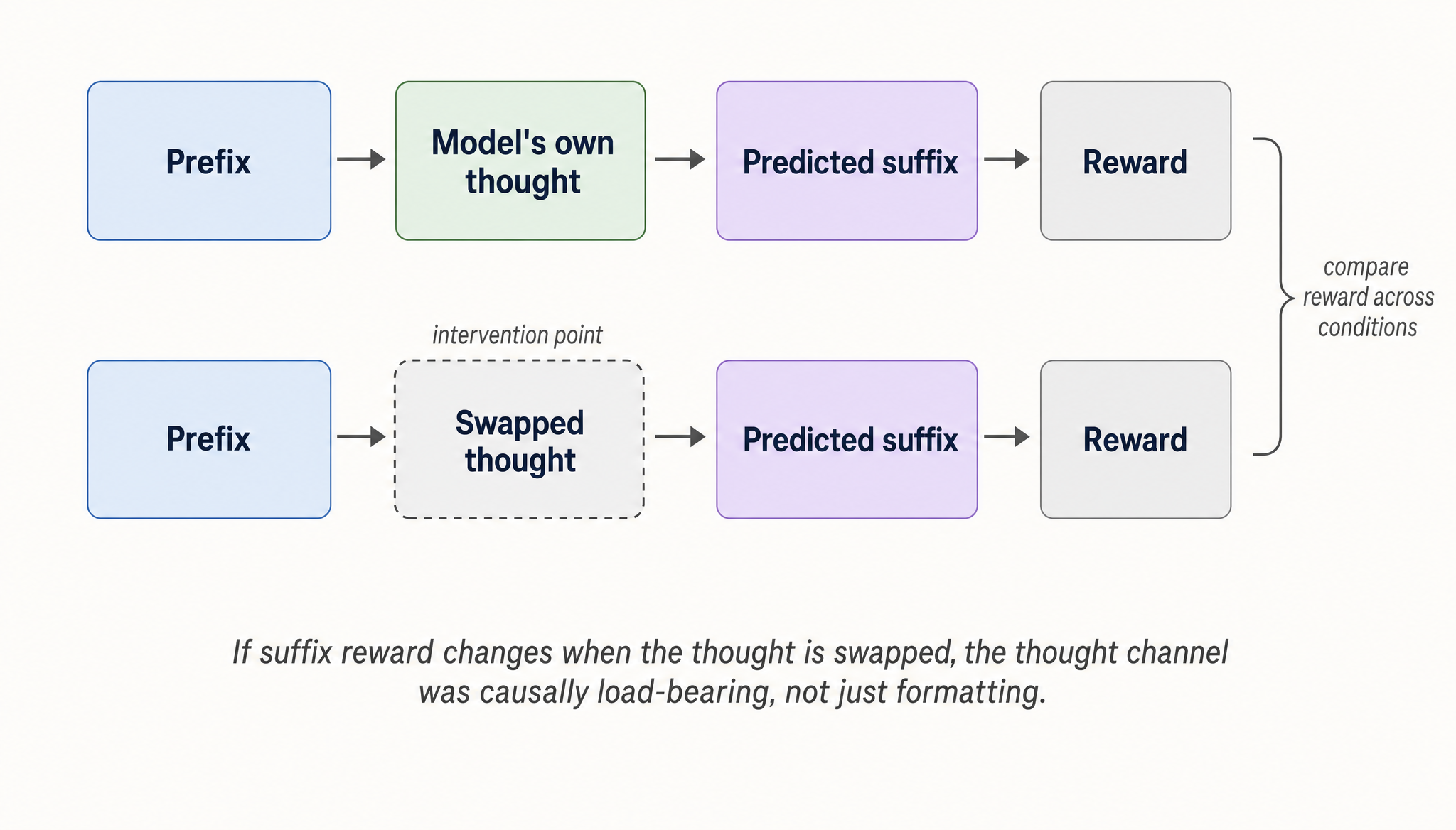

To move beyond aggregate reward, I ran a causal thought-use probe. The intervention was simple. Replace the model’s generated thought before suffix generation, then measure whether suffix reward changes. This tests whether interleaved thoughts are just formatting, or whether they actually steer continuation behavior.

Swapping in an unrelated thought sharply reduced suffix reward across all arms, down to reward means between 0.016 and 0.023. That means thought text was not decorative. It controlled behavior. The thought channel had become a real handle on the model’s continuation policy, not just a tag format learned during SFT.

Blank and generic thoughts often matched or beat the model’s own sampled thoughts. Given the 0.6B scale, sparse reward, and short RLMT run, the model learned a thought-conditioned interface before it learned to reliably generate the best thoughts for that interface. The positive result is causal sensitivity: changing the thought text changes the model’s later continuation behavior under the same prefix. Here, unrelated thoughts sharply reduced suffix reward, so the thought text was not just formatting. The unresolved problem is thought quality.
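The bookkeeping for a probe like this can be sketched as a loop over thought conditions with the prefix held fixed. Everything below is a toy: `continue_from` and `judge` are hypothetical stand-ins for the model and the LLM judge, and the resulting numbers only illustrate the mechanics, not the experiment's outcomes.

```python
from statistics import mean


def thought_use_probe(items, conditions, continue_from, judge):
    """Mean suffix reward per thought condition, holding the prefix fixed."""
    rewards = {name: [] for name in conditions}
    for item in items:
        for name, make_thought in conditions.items():
            thought = make_thought(item)
            predicted = continue_from(item["prefix"], thought)
            rewards[name].append(1 if judge(predicted, item["suffix"]) else 0)
    return {name: mean(values) for name, values in rewards.items()}


def toy_continue(prefix, thought):
    # Toy model: echoes the last word of the thought, or of the prefix
    # when the thought is blank.
    source = thought if thought else prefix
    return source.split()[-1]


def toy_judge(predicted, truth):
    return predicted == truth


items = [
    {"prefix": "Cells divide by", "suffix": "mitosis",
     "own": "think about mitosis", "swapped": "think about clouds"},
    {"prefix": "Rain falls from", "suffix": "clouds",
     "own": "think about clouds", "swapped": "think about mitosis"},
]
conditions = {
    "own": lambda item: item["own"],          # the model's sampled thought
    "swapped": lambda item: item["swapped"],  # thought from an unrelated item
    "blank": lambda item: "",                 # empty thought
}
result = thought_use_probe(items, conditions, toy_continue, toy_judge)
print(result)
```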

| Stage | Primary evidence | Interpretation |
|---|---|---|
| Continued pretraining | 81/128 held-out pairwise continuation wins over Qwen3-0.6B-Base | The self-improved checkpoint produced better judged continuations |
| Interleaved-thinking SFT | Thought NLL dropped from 4.24 to 3.16 and 3.14 | SFT installed the thought interface |
| RLMT reward gate | Self-improved RLMT reached the highest reward mean at 0.098 | RLMT made the interface rewardable under the suffix-prediction objective |
| Reasoning eval | GSM8K, MATH-500, GPQA-Diamond, and OlympiadBench moved differently across Mean@8 and Pass@8 | Downstream reasoning did not improve uniformly |
| Thought-use probe | Swapped thoughts reduced reward to 0.016-0.023 | Thought text became a causal behavioral control surface |

Implications for Training

Small language models are usually framed as vertical tools. They work when the task is narrow, the domain is bounded, and the behavior is easy to specify.

A small language model can be specialized not only for a domain, but for the behaviors later training will need to refine.

Tan et al. argue that reasoning should be trained earlier in the lifecycle because raw pretraining text does not expose the intermediate reasoning behavior later post-training has to optimize. I read this experiment as a small-scale version of that idea. The model learned a continuation preference, a thought interface, and a rewardable suffix-prediction loop. The thought channel then became behaviorally causal.

The question is not only whether a small model can solve a vertical task. It is whether early training can make the model easier to turn into a reliable agent later.

For scientific and engineering agents, the relevant behaviors are often not captured by today’s benchmarks. Planning, revision, tool use, verification, and recovery are lifecycle behaviors. If those behaviors are bounded by what the base model can express, then the place to shape them is earlier than we usually do.

Limitations and Closing

This is a small-scale adaptation of Tan et al., not a full reproduction. The experiment uses Qwen3-0.6B-Base, a short RLMT run, and a smaller evaluation budget than the original paper. The right comparison is therefore the structure of the training signal, not the benchmark magnitude.

The main limitation is scale. The continued-pretraining result is a judged continuation-quality result, the RLMT reward gate measures the paper-aligned suffix-prediction objective, and the downstream reasoning evaluation is mixed across benchmarks and metrics. These results support the claim that the lifecycle can be made trainable at small scale. They do not support a claim that this model learned a mature agentic reasoning policy.

The thought-use probe should be read the same way. Swapped thoughts reduced reward, so the thought channel mattered behaviorally. Blank and generic thoughts often matched or beat sampled thoughts, so the model had not learned to reliably generate the best thoughts for that channel. That is a limitation on the learned policy, not on whether the interface existed.

Ordinary pretraining text can be turned into a sequence of training environments before agentic post-training begins. Continued pretraining can select better continuations, interleaved SFT can expose a thought interface, and RLMT can make that interface rewardable. For small language models, that suggests a path beyond narrow vertical specialization. Train the behavioral interface earlier, then let later post-training refine it.

The experiment is small, but if later post-training is bounded by the behaviors a base model can express, then some of the most important work belongs earlier in the lifecycle.